Other Providers

The built-in chat UI supports the OpenAI API, Mistral, OpenRouter, LM Studio, and custom OpenAI-compatible providers.

Pay-as-you-go Pricing

Most of these options (besides LM Studio) use pay-as-you-go pricing, which can get expensive quickly with advanced models and long conversations. Monitor your API key usage.
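
To see why long conversations add up: chat APIs are typically stateless, so each request resends the conversation so far, and the billed input tokens grow roughly with the square of the number of turns. The sketch below is a rough estimate under that assumption. The function names, the token counts, and the price are hypothetical placeholders, not any provider's actual rates.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int, overhead: int = 0) -> int:
    """Estimate the total input tokens billed across a whole conversation.

    Assumes request k resends all k turns so far plus a fixed per-request
    overhead (for example a system prompt). All numbers are illustrative.
    """
    history = tokens_per_turn * turns * (turns + 1) // 2
    return history + overhead * turns


def estimated_cost(total_tokens: int, usd_per_million_tokens: float) -> float:
    """Convert a token count to dollars at a given (hypothetical) price."""
    return total_tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    total = cumulative_input_tokens(turns=20, tokens_per_turn=500, overhead=2000)
    # The price is a made-up placeholder; check your provider's pricing page.
    print(total, estimated_cost(total, usd_per_million_tokens=3.0))
```

Under these assumptions, doubling the number of turns roughly quadruples the history tokens, which is why starting a fresh conversation now and then can keep costs down.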

Setup Steps

- Download Producer_Pal.amxd and drag it to a MIDI track in Ableton Live

- In the Producer Pal device, click "Open Chat UI"

- Configure your provider as described below

- Click "Quick Connect" and say "connect to ableton"

Available Providers

OpenRouter

OpenRouter is an "AI gateway" with hundreds of LLMs in one place. Includes free and pay-as-you-go options.

- Get an OpenRouter API key

- In the chat UI settings:

- Provider: OpenRouter

- API Key: Your key

- Model: e.g., `anthropic/claude-sonnet-4` or `google/gemini-2.5-pro`

Mistral

Mistral offers AI models developed in France. Free tier available with fairly aggressive quotas.

- Get a Mistral API key

- In the chat UI settings:

- Provider: Mistral

- API Key: Your key

- Model: e.g., `mistral-large-latest`

OpenAI API

OpenAI offers GPT models with pay-as-you-go pricing. For detailed setup, see the dedicated OpenAI guide.

- Get an OpenAI API key

- In the chat UI settings:

- Provider: OpenAI

- API Key: Your key

- Model: e.g., `gpt-5.2`

Subscription Alternative

Prefer flat-rate pricing? The Codex App and Codex CLI both work with OpenAI's subscription plans.

Custom Providers

For other OpenAI-compatible providers:

- In the chat UI settings:

- Provider: Custom (OpenAI-compatible)

- API Key: Your provider's key

- URL: Your provider's API endpoint

- Model: The model name

Example: Groq

- Provider: Custom (OpenAI-compatible)

- URL: `https://api.groq.com/openai/v1`

- Model: `llama-3.3-70b-versatile`
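
For context, "OpenAI-compatible" generally means the provider accepts the same request shape as OpenAI's chat completions endpoint: a POST to the base URL plus `/chat/completions`, with the key sent as a bearer token. The sketch below builds such a request from the three settings above without sending it. The helper name `build_chat_request` and the placeholder key are hypothetical, and it is an assumption that the chat UI issues requests of this shape.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        "https://api.groq.com/openai/v1",  # URL from the Groq example
        "YOUR_API_KEY",                    # placeholder, not a real key
        "llama-3.3-70b-versatile",         # model from the Groq example
        "Say hello",
    )
    print(req.get_method(), req.full_url)
```

If a provider's documentation lists a base URL that works with this pattern, the same URL is likely what belongs in the URL field.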

Privacy Note

Your API key is stored in browser local storage. Use a private browser session if that concerns you, or delete the key from settings after use.

LM Studio API

To run models locally for free, you can use LM Studio with the built-in chat UI instead of LM Studio's native UI.

Install LM Studio and download a model that supports tools

Go to the LM Studio developer tab

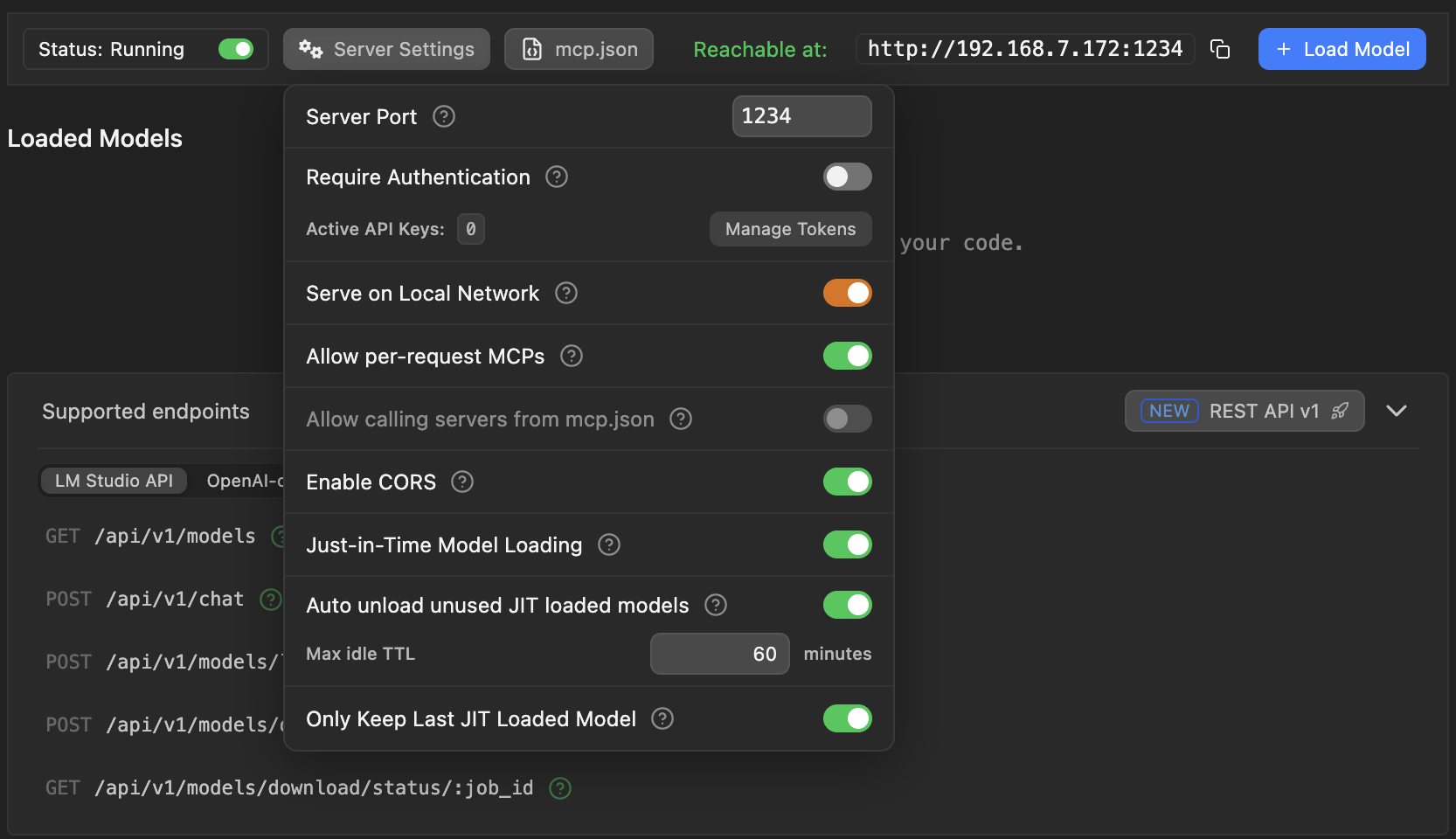

Open Server Settings and configure:

- Server Port - Should be `1234` (if different, adjust the URL in your Producer Pal connection settings to match)

- Enable CORS - Required for browser access

- Serve on Local Network - Enable this if running LM Studio on a different computer (allows other devices to connect)

Start the LM Studio server (should say "Status: Running")

In the Producer Pal Chat UI settings:

- Provider: LM Studio (local)

- URL: Copy from LM Studio's "Reachable at:" field

- Default when everything runs on the same computer: `http://localhost:1234`

- When "Serve on Local Network" is enabled, use the network address shown (e.g., `http://192.168.7.172:1234`)

- Model: A model that supports tools, such as `qwen/qwen3.5-9b`, `google/gemma-4-e4b`, `mistralai/devstral-small-2-2512`, or `zai-org/glm-4.7-flash`

Save and click "Quick Connect"
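
If Quick Connect fails, it can help to confirm that the LM Studio server is reachable at all. The sketch below lists the loaded model IDs through the server's OpenAI-style `/v1/models` endpoint. The helper names are hypothetical, and the response is assumed to follow the usual OpenAI list shape with a `data` array of objects carrying an `id`.

```python
import json
import urllib.request


def models_url(base_url: str) -> str:
    """Return the model-listing URL for a base URL such as http://localhost:1234."""
    base = base_url.rstrip("/")
    if not base.endswith("/v1"):
        base += "/v1"
    return base + "/models"


def list_model_ids(base_url: str, timeout: float = 5.0) -> list[str]:
    """Fetch model IDs; raises URLError if the server is stopped or unreachable."""
    with urllib.request.urlopen(models_url(base_url), timeout=timeout) as resp:
        payload = json.load(resp)
    return [entry["id"] for entry in payload.get("data", [])]


if __name__ == "__main__":
    try:
        print(list_model_ids("http://localhost:1234"))
    except OSError as err:  # URLError is a subclass of OSError
        print("Server not reachable:", err)
```

Because this runs outside the browser, it only checks that the server is up and the port is right. It does not exercise the CORS setting, which still needs to be enabled for the chat UI.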

Model Tool Support

If the model responds with garbled text like `<|tool_call_start|>...` or says it can't connect to Ableton, the model doesn't support tools. In LM Studio, look for the hammer icon next to a model's name.
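
As an illustration of what the garbled text looks like, the sketch below flags replies that contain raw special-token markers of the form `<|name|>`. It is only a heuristic with a hypothetical helper name, not something Producer Pal is known to do, and it cannot catch the case where the model simply says it can't connect.

```python
import re

# Matches special-token markers such as <|tool_call_start|> that show up
# as plain text when a model's tool calls are not parsed.
SPECIAL_TOKEN = re.compile(r"<\|[A-Za-z0-9_]+\|>")


def looks_like_leaked_tool_call(reply: str) -> bool:
    """Return True if the reply contains a raw <|...|> marker."""
    return SPECIAL_TOKEN.search(reply) is not None


if __name__ == "__main__":
    print(looks_like_leaked_tool_call("<|tool_call_start|>..."))
    print(looks_like_leaked_tool_call("Connected to Ableton Live."))
```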

Small Model Mode

Enable "Small Model Mode" in the Producer Pal Setup tab for better compatibility with local models. See LM Studio tips for more optimization advice.

Troubleshooting

If the built-in chat doesn't work, see the Troubleshooting Guide.